Procedural Generation of Portal 2 levels using DCGANs

Group 23 report:

Original Goal

To create a DCGAN that is trained on a large corpus of levels and produces simple, working levels with interesting structures.

Why Deep Convolutional Generative Adversarial Networks (DCGANs)?

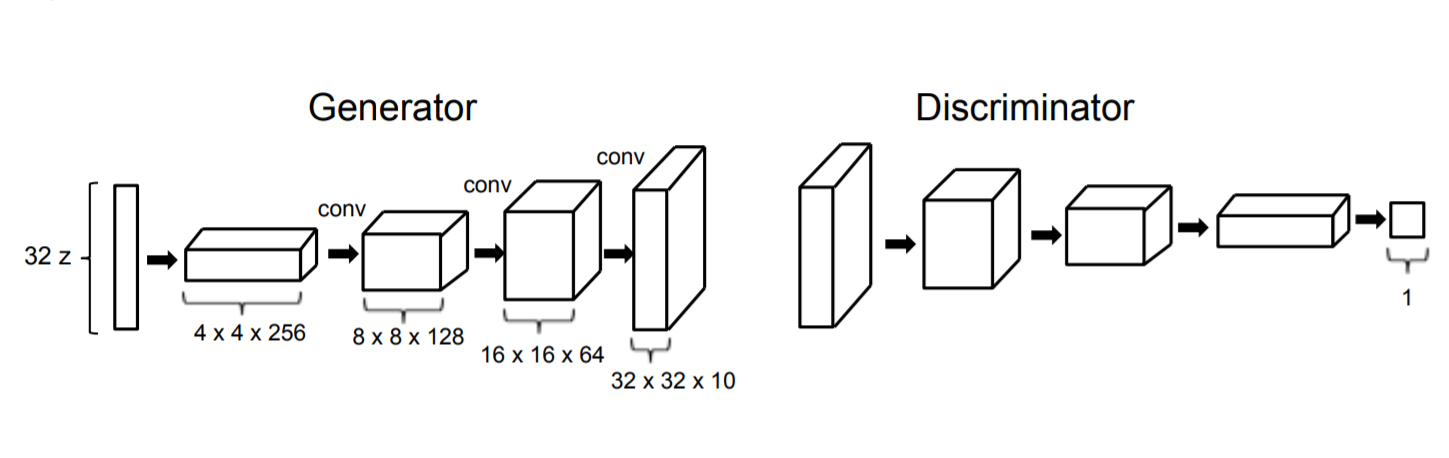

The GAN architecture was first introduced in 2014 by Ian Goodfellow et al. in the paper "Generative Adversarial Networks". A more structured variant, the Deep Convolutional Generative Adversarial Network (DCGAN), was developed by Alec Radford et al. in 2015 in the paper "Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks". A GAN's generator learns to map points in a latent space to samples from the training distribution, so sampling new latent vectors produces novel examples that lie in that same distribution. DCGANs have been very successful in generative applications.
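For reference, the original GAN formulation trains a discriminator D and a generator G against each other on the minimax objective below, where p_data is the distribution of real training levels and p_z is the distribution the latent vectors z are drawn from. This is the textbook objective from Goodfellow et al., not necessarily the exact loss used in this project; in practice the generator is usually trained to maximize log D(G(z)) instead, which gives stronger gradients early in training. At the optimum of this game the generator's distribution matches p_data, which is what motivates using a GAN to produce new levels that resemble the corpus.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```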

Assuming that level structures also form a coherent distribution, a DCGAN should be able to approximate that distribution and create new levels.

This approach has been shown to work on 2D games such as Super Mario Bros. (Volz et al.).

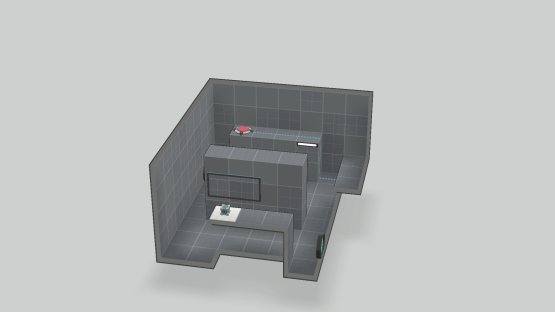

Data

Portal 2 has a community workshop where players can upload mods, visual enhancements and new levels. The workshop holds a very large corpus of levels, which is one of the reasons the game was considered ideal for this project.

However, translating the workshop levels into a usable form proved too difficult, so a new, much smaller corpus was created by hand instead. To make the most of this smaller corpus, the dataset includes rotations and reflections of each level, giving 8 data points for every working level in the corpus.
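The augmentation step can be sketched as follows. This is a minimal pure-Python sketch, not the project's code: it assumes a level is stored as nested lists indexed level[y][z][x] with y as the vertical axis, and that levels are only rotated about that axis (4 quarter-turns, each with and without a left-right mirror). The choice of axis is our assumption. Those 8 combinations are exactly the symmetries of a square, which matches the 8 data points per level.

```python
from typing import List

# ASSUMPTION: a level is indexed level[y][z][x] and y is the vertical axis,
# so each horizontal layer level[y] is a 2D grid we can rotate or mirror.
Grid2D = List[List[int]]
Level = List[Grid2D]


def rotate_layer(layer: Grid2D) -> Grid2D:
    """Rotate one horizontal layer 90 degrees clockwise."""
    return [list(row) for row in zip(*layer[::-1])]


def mirror_layer(layer: Grid2D) -> Grid2D:
    """Reflect one horizontal layer left-to-right."""
    return [row[::-1] for row in layer]


def augment(level: Level) -> List[Level]:
    """Return 8 variants: 4 rotations about the vertical axis, each with and without a mirror."""
    variants = []
    current = level
    for _ in range(4):
        variants.append(current)
        variants.append([mirror_layer(layer) for layer in current])
        current = [rotate_layer(layer) for layer in current]
    return variants
```

Applied to every hand-made level, augment multiplies the corpus size by 8. For a level that is itself symmetric, some of the 8 variants will be identical, so the effective gain can be smaller.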

While the disadvantages of a smaller corpus are obvious, it lets us work with simple levels of homogeneous size, which makes a proof of concept easier to build.

The Model

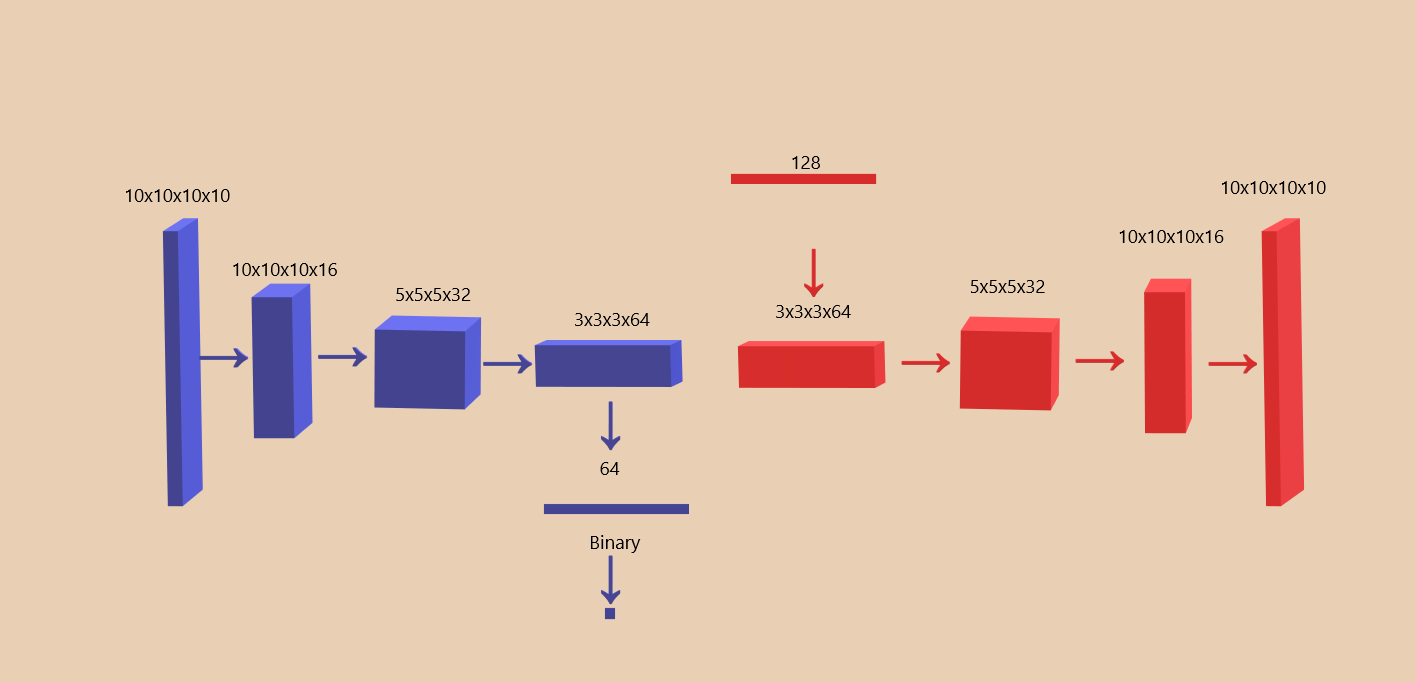

The discriminator and generator mirror each other's structure. In the discriminator, the convolution layers build increasingly abstract representations of the input level, which are condensed into a single real-or-fake score.

In the generator, a similar series of transposed convolutions takes a 128-dimensional latent vector and produces a (10, 10, 10, 10) output: a 10x10x10 level with 10 channels per cell.
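To turn a generator output into a playable grid, each of the 1,000 cells has to be assigned a single tile. The sketch below assumes, as a hypothesis not confirmed by the report, that the 10 output channels score 10 tile types, so the tile in each cell is the channel with the highest score (argmax). It also assumes standard-normal latent noise. sample_latent and decode are hypothetical helper names; a NumPy generator output would first be converted with .tolist().

```python
import random
from typing import List, Optional

LATENT_DIM = 128          # latent size stated in the report
GRID, CHANNELS = 10, 10   # generator output shape (10, 10, 10, 10)


def sample_latent(dim: int = LATENT_DIM, seed: Optional[int] = None) -> List[float]:
    """Draw one latent vector; standard-normal noise is an ASSUMPTION."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def decode(output) -> List[List[List[int]]]:
    """Turn a (10, 10, 10, C) grid of per-channel scores into tile ids.

    ASSUMPTION: each channel scores one tile type, so every cell takes the
    index of its highest-scoring channel (argmax).
    """
    return [
        [[max(range(len(cell)), key=cell.__getitem__) for cell in row] for row in plane]
        for plane in output
    ]
```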

This model has been updated since our milestone presentation.

Excessive Padding

We reduced the level size from 16x16x16 to 10x10x10 to cut down on padding. Excessive padding is a problem because it inflates the size of the data, and with it the model, without adding any information: with 16x16x16 levels, up to 80% of some levels was padding. Capping levels at 10x10x10 greatly reduces the padding percentage.
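The padding percentage is simple arithmetic: for a level of size w x h x d placed in an n x n x n grid, the padded fraction is 1 - (w*h*d)/n^3. The sketch below computes it and pads a nested-list level with empty cells. The 9x9x10 level size in the usage note, the empty-tile value 0 and the choice to pad only at the far end of each axis are illustrative assumptions, not details from the report.

```python
from typing import List, Sequence

Level = List[List[List[int]]]  # indexed level[y][z][x]


def padding_fraction(level_shape: Sequence[int], grid_size: int) -> float:
    """Fraction of a grid_size^3 grid that is padding for a w x h x d level."""
    w, h, d = level_shape
    if max(level_shape) > grid_size:
        raise ValueError("level does not fit in the grid")
    return 1 - (w * h * d) / grid_size ** 3


def pad_level(level: Level, grid_size: int, empty: int = 0) -> Level:
    """Pad a level to grid_size^3 cells; `empty` is an ASSUMED tile id for padding."""
    padded = []
    for y in range(grid_size):
        layer = []
        for z in range(grid_size):
            row = []
            for x in range(grid_size):
                inside = y < len(level) and z < len(level[y]) and x < len(level[y][z])
                row.append(level[y][z][x] if inside else empty)
            layer.append(row)
        padded.append(layer)
    return padded
```

For a hypothetical 9x9x10 level, padding_fraction((9, 9, 10), 16) is about 0.80, while padding_fraction((9, 9, 10), 10) is 0.19, the kind of reduction described above.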

Deeper Network

The network was made deeper to encourage better structure in the generated levels.

Following the reference architecture, the number of channels increases as the spatial dimensions of each layer shrink.

Discriminator:

| Layer (type) | Output Shape | Param # |
|---|---|---|
| conv3d_3 (Conv3D) | (None, 10, 10, 10, 16) | 4336 |
| leaky_re_lu_15 (LeakyReLU) | (None, 10, 10, 10, 16) | 0 |
| conv3d_4 (Conv3D) | (None, 5, 5, 5, 32) | 13856 |
| leaky_re_lu_16 (LeakyReLU) | (None, 5, 5, 5, 32) | 0 |
| conv3d_5 (Conv3D) | (None, 3, 3, 3, 64) | 55360 |
| leaky_re_lu_17 (LeakyReLU) | (None, 3, 3, 3, 64) | 0 |
| global_max_pooling3d_1 (GlobalMaxPooling3D) | (None, 64) | 0 |
| dense_5 (Dense) | (None, 1) | 65 |

Total params: 73,617. Trainable params: 73,617. Non-trainable params: 0.

Model: "generator"

| Layer (type) | Output Shape | Param # |
|---|---|---|
| dense_6 (Dense) | (None, 1728) | 222912 |
| leaky_re_lu_18 (LeakyReLU) | (None, 1728) | 0 |
| reshape_4 (Reshape) | (None, 3, 3, 3, 64) | 0 |
| conv3d_transpose_12 (Conv3DTranspose) | (None, 6, 6, 6, 32) | 131104 |
| cropping3d_2 (Cropping3D) | (None, 5, 5, 5, 32) | 0 |
| leaky_re_lu_19 (LeakyReLU) | (None, 5, 5, 5, 32) | 0 |
| conv3d_transpose_13 (Conv3DTranspose) | (None, 10, 10, 10, 16) | 32784 |
| leaky_re_lu_20 (LeakyReLU) | (None, 10, 10, 10, 16) | 0 |
| conv3d_transpose_14 (Conv3DTranspose) | (None, 10, 10, 10, 10) | 10250 |

Total params: 397,050. Trainable params: 397,050. Non-trainable params: 0.
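The parameter counts and output shapes in the two summaries can be checked by hand. A Conv3D or Conv3DTranspose layer with kernel size k has k^3 x c_in x c_out weights plus c_out biases, and a Dense layer has n_in x n_out weights plus n_out biases. The kernel sizes (3 in the discriminator, 4 in the generator), the strides, "same" padding and the 10 input channels are not stated in the report; they are inferred here because they reproduce the listed numbers exactly.

```python
import math


def conv3d_params(k: int, c_in: int, c_out: int) -> int:
    """Conv3D / Conv3DTranspose: k^3 weights per (in, out) channel pair, plus biases."""
    return k ** 3 * c_in * c_out + c_out


def dense_params(n_in: int, n_out: int) -> int:
    """Dense: full weight matrix plus one bias per output unit."""
    return n_in * n_out + n_out


def same_conv_size(n: int, stride: int) -> int:
    """Output side length of a Conv3D with padding='same'."""
    return math.ceil(n / stride)


def same_transpose_size(n: int, stride: int) -> int:
    """Output side length of a Conv3DTranspose with padding='same'."""
    return n * stride


# Discriminator: 10 -> 16 -> 32 -> 64 channels, kernel 3, strides 1, 2, 2 (inferred)
disc_params = (conv3d_params(3, 10, 16) + conv3d_params(3, 16, 32)
               + conv3d_params(3, 32, 64) + dense_params(64, 1))
disc_sizes = [10]
for stride in (1, 2, 2):
    disc_sizes.append(same_conv_size(disc_sizes[-1], stride))

# Generator: 128 -> Dense(3*3*3*64), then transposed convs with kernel 4 (inferred)
gen_params = (dense_params(128, 3 * 3 * 3 * 64) + conv3d_params(4, 64, 32)
              + conv3d_params(4, 32, 16) + conv3d_params(4, 16, 10))
n = same_transpose_size(3, 2)  # 3 -> 6
n -= 1                         # Cropping3D removes one cell per axis: 6 -> 5
n = same_transpose_size(n, 2)  # 5 -> 10
n = same_transpose_size(n, 1)  # 10 -> 10
gen_size = n

print(disc_params, disc_sizes, gen_params, gen_size)  # 73617 [10, 10, 5, 3] 397050 10
```

The cropping step is what lets the generator reach an even side length of 10 from a 3x3x3 seed: doubling 3 gives 6, which is trimmed to 5 so that one more doubling lands on 10.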

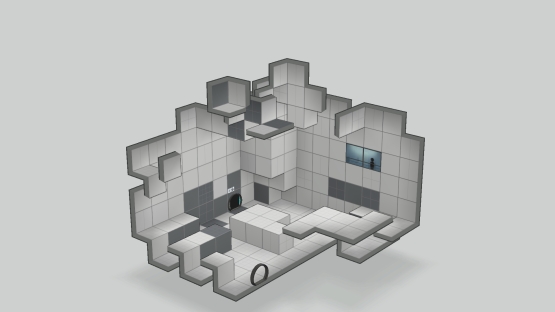

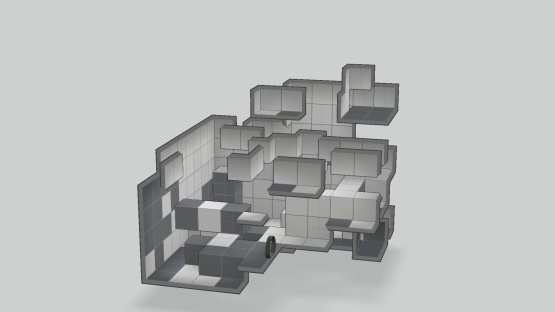

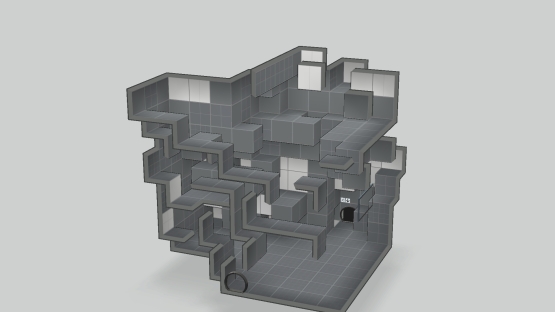

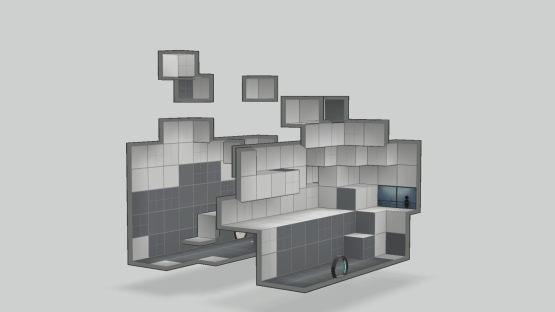

Results

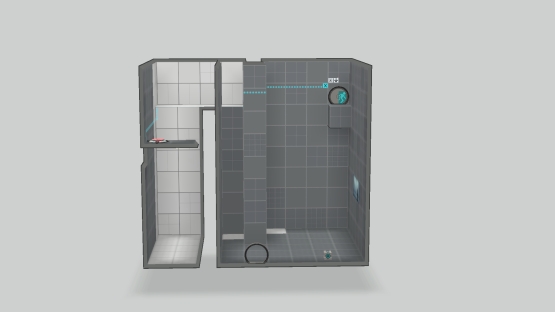

This last level is the most interesting one so far. It contains two separate chambers connected by a small passage, a structure that could make for a very interesting puzzle design. One level in the training set has a similar characteristic, but the orientation of the level, the size of the chambers and the location of the passage are very different.

This suggests that the algorithm is capable of producing some good results, but in its current state the noisy, unusable results far outnumber the good ones.

Current problems

Buggy Item Placement

The item placement that creates the actual puzzle is badly flawed: items appear inside solid walls, and levels contain multiple entry and exit doors. The hope was that the algorithm would learn that each level has exactly one entry door, which must be placed on a flat wall.

None of the versions of the algorithm produced sensible item placement. This was a concern in the Mario DCGAN paper as well: power-ups in a Mario level are rare and widely scattered, so the algorithm treats them almost as outliers. To get around this, a convolution was applied to all such game elements to increase their significance during training. The same solution could be explored here.
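The placement rules described above can be expressed as an automatic check on generated levels. This is a hypothetical sketch: the tile ids (0 empty, 1 solid, 2 entry door, 3 exit door) are invented for illustration, an item counts as "inside a wall" when none of its six face-neighbours is empty, and the flat-wall rule for the entry door is not checked.

```python
from typing import List

# Hypothetical tile ids; the report does not document its encoding.
EMPTY, SOLID, ENTRY_DOOR, EXIT_DOOR = 0, 1, 2, 3

# The six face-adjacent offsets, in (dy, dz, dx) order to match level[y][z][x].
NEIGHBOURS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def check_items(level) -> List[str]:
    """Return human-readable item-placement problems (an empty list means OK)."""
    problems = []
    counts = {ENTRY_DOOR: 0, EXIT_DOOR: 0}
    ny, nz, nx = len(level), len(level[0]), len(level[0][0])
    for y in range(ny):
        for z in range(nz):
            for x in range(nx):
                tile = level[y][z][x]
                if tile in (EMPTY, SOLID):
                    continue  # geometry, not an item
                if tile in counts:
                    counts[tile] += 1
                # An item with no empty face-neighbour is embedded in solid geometry.
                has_open_side = any(
                    0 <= y + dy < ny and 0 <= z + dz < nz and 0 <= x + dx < nx
                    and level[y + dy][z + dz][x + dx] == EMPTY
                    for dy, dz, dx in NEIGHBOURS
                )
                if not has_open_side:
                    problems.append(f"item {tile} at {(y, z, x)} is buried in solid cells")
    for tile, name in ((ENTRY_DOOR, "entry"), (EXIT_DOOR, "exit")):
        if counts[tile] != 1:
            problems.append(f"expected 1 {name} door, found {counts[tile]}")
    return problems
```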

Another suggestion is to divide level generation into three parts:

- Geometry generation

- Item placement

- Connections
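One possible reading of the Connections stage is a check that the exit can be reached from the entry through open space. The sketch below does this with a breadth-first flood fill over face-adjacent, non-solid cells. It reuses hypothetical tile ids (an assumption, not the project's encoding) and deliberately ignores Portal 2 mechanics such as portals, gravity and jumps, so it only tests whether the geometry is connected, for example whether two chambers are joined by a passage.

```python
from collections import deque

# Hypothetical tile ids; the report does not document its encoding.
EMPTY, SOLID, ENTRY_DOOR, EXIT_DOOR = 0, 1, 2, 3


def find(level, tile):
    """Return the (y, z, x) position of the first cell holding `tile`, or None."""
    for y, plane in enumerate(level):
        for z, row in enumerate(plane):
            for x, t in enumerate(row):
                if t == tile:
                    return (y, z, x)
    return None


def exit_reachable(level) -> bool:
    """Flood-fill from the entry door through non-solid cells; True if the exit is reached."""
    start, goal = find(level, ENTRY_DOOR), find(level, EXIT_DOOR)
    if start is None or goal is None:
        return False
    ny, nz, nx = len(level), len(level[0]), len(level[0][0])
    seen = {start}
    queue = deque([start])
    while queue:
        y, z, x = queue.popleft()
        if (y, z, x) == goal:
            return True
        for dy, dz, dx in ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)):
            nb = (y + dy, z + dz, x + dx)
            # Any non-solid cell (empty space or another item) counts as passable.
            if (0 <= nb[0] < ny and 0 <= nb[1] < nz and 0 <= nb[2] < nx
                    and nb not in seen and level[nb[0]][nb[1]][nb[2]] != SOLID):
                seen.add(nb)
                queue.append(nb)
    return False
```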

Lack of Data

The lack of data points is an obvious problem. We tried to address it by reaching out to the community and asking for level files, but, as we should have expected for a nine-year-old game, the community is not very active and the response was underwhelming.

Conclusion

As we saw, the project shows promise, but there is still a long way to go; the original scope of the project was far too broad. As the project went along, the problem was reduced to producing interesting level geometry in which a puzzle could be built.

Code

Link to the GitHub repository

References

- Volz et al., “Evolving Mario Levels in the Latent Space of a Deep Convolutional Generative Adversarial Network”, in Proceedings of the Genetic and Evolutionary Computation Conference, pp. 221-228 (2018)

- Giacomello et al., “DOOM Level Generation using Generative Adversarial Networks”, in 2018 IEEE Games, Entertainment, Media Conference (GEM), pp. 316-323 (2018)

- Irfan et al., “Evolving Levels for General Games Using Deep Convolutional Generative Adversarial Networks”, in 11th Computer Science and Electronic Engineering (CEEC), pp. 96-101 (2019)

- Juraska et al., “ViGGO: A Video Game Corpus for Data-To-Text Generation in Open-Domain Conversation”, in Proceedings of the 12th International Conference on Natural Language Generation, pp. 164-172 (2019)

- How to Identify and Diagnose GAN Failure Modes

- Why Do GANs Need So Much Noise?

- DCGANs reference code